Agentic Workflow Design

Prior Authorization LLM Copilot

Concept project grounded in real clinical workflow experience

Role :

AI Product Designer

Industry :

Healthcare

Tools :

Figma, Claude, Perplexity

Project Duration :

6 Weeks

The problem no one was talking about

A physician writes a prescription. For many treatments, such as biologics, specialty drugs, and complex procedures, that’s not the end of the story. Before the patient can actually receive the therapy, the insurer needs to sign off. This step is called prior authorization: a check to confirm that the treatment meets clinical criteria.

On paper, it sounds reasonable. In practice, it rarely feels that way. Behind the scenes, clinical support staff (prior authorization specialists, nurses, medical assistants) take over. They pull information from the patient’s chart, interpret payer-specific guidelines, and re-enter the same details into different submission portals, each with its own format and expectations.

The work is repetitive, but the stakes are high. Miss one required detail and the request gets denied, and the whole submission starts over. Every payer defines “complete” differently. And for higher-cost therapies, a single submission can take anywhere from 30 minutes to well over an hour.

The real bottleneck isn’t documentation. It’s translation.

Translating clinical data into payer-specific requirements. Mapping chart notes to structured fields. Framing a justification in a way that aligns with how each payer evaluates requests.

That’s where time disappears. And where small, costly errors creep in.
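To make the translation problem concrete, here is a minimal, hypothetical sketch of a payer-specific completeness check. The payer names, field names, and sample values are invented placeholders, not real payer criteria and not the copilot's actual implementation. The point is only that the same chart data can be "complete" for one payer and incomplete for another.

```python
from dataclasses import dataclass

# Hypothetical payer requirements: each payer lists the fields it treats as required.
# These names are illustrative assumptions, not real payer policy.
PAYER_REQUIREMENTS: dict[str, set[str]] = {
    "payer_a": {"diagnosis_code", "prior_therapies", "lab_results"},
    "payer_b": {"diagnosis_code", "prior_therapies", "prescriber_specialty", "step_therapy_dates"},
}


@dataclass
class CompletenessReport:
    payer: str
    missing: list[str]

    @property
    def is_complete(self) -> bool:
        # Complete only when no required field is missing.
        return not self.missing


def check_completeness(chart_fields: dict[str, str], payer: str) -> CompletenessReport:
    """Compare fields extracted from a chart against one payer's required fields."""
    required = PAYER_REQUIREMENTS[payer]
    # A field counts as present only if it exists and is non-empty after stripping whitespace.
    present = {name for name, value in chart_fields.items() if value.strip()}
    return CompletenessReport(payer=payer, missing=sorted(required - present))


if __name__ == "__main__":
    # Placeholder chart data for illustration only.
    chart = {
        "diagnosis_code": "example-dx",
        "prior_therapies": "example-therapy, 12 weeks",
        "lab_results": "example-lab",
        "prescriber_specialty": "",
    }
    for payer in PAYER_REQUIREMENTS:
        report = check_completeness(chart, payer)
        print(payer, "complete" if report.is_complete else f"missing: {report.missing}")
```

With this sample chart, `payer_a` reports complete while `payer_b` reports `prescriber_specialty` and `step_therapy_dates` as missing. A presence check like this only catches the "missing criteria" class of error; judging whether a present value actually satisfies a payer's criterion is the harder, judgment-heavy part of the work.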

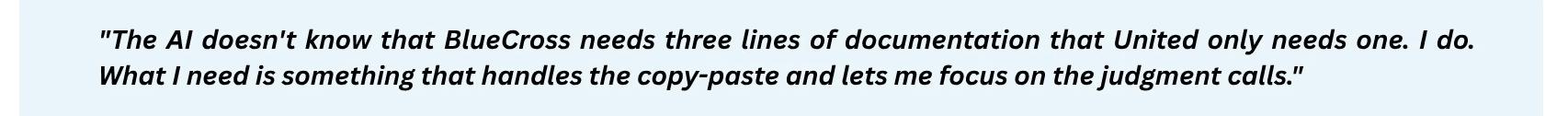

When an LLM was introduced to help, it seemed promising at first. It produced clean, confident summaries of the patient’s case. But it often missed payer-specific requirements. And because the output sounded complete, those gaps were hard to spot. Staff couldn’t rely on it, so they checked everything, line by line.

The AI didn’t remove work. It added another layer of review.

Which led to a more fundamental question:

What does trustworthy LLM assistance look like in a workflow where completeness and correctness aren’t optional?

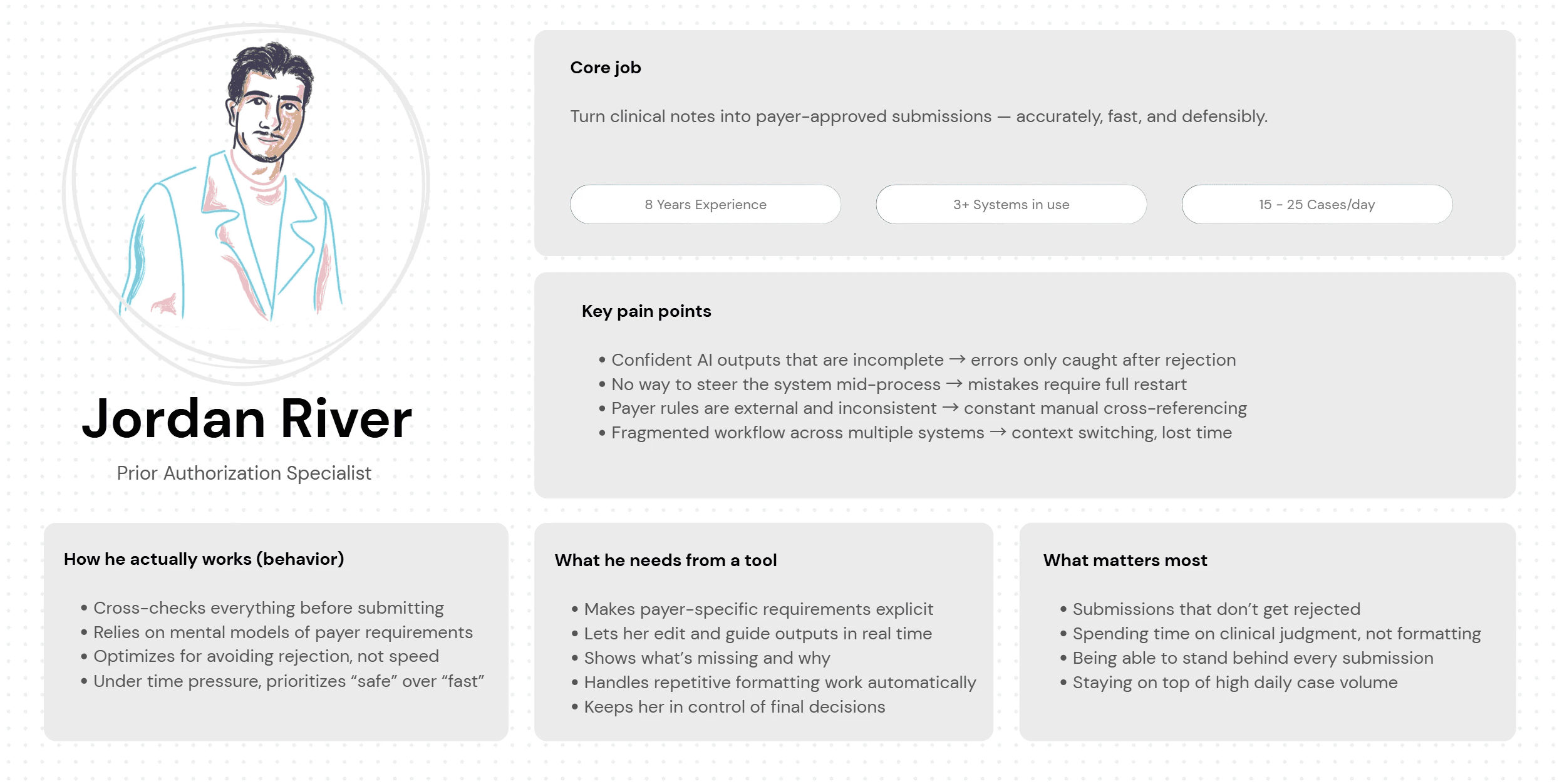

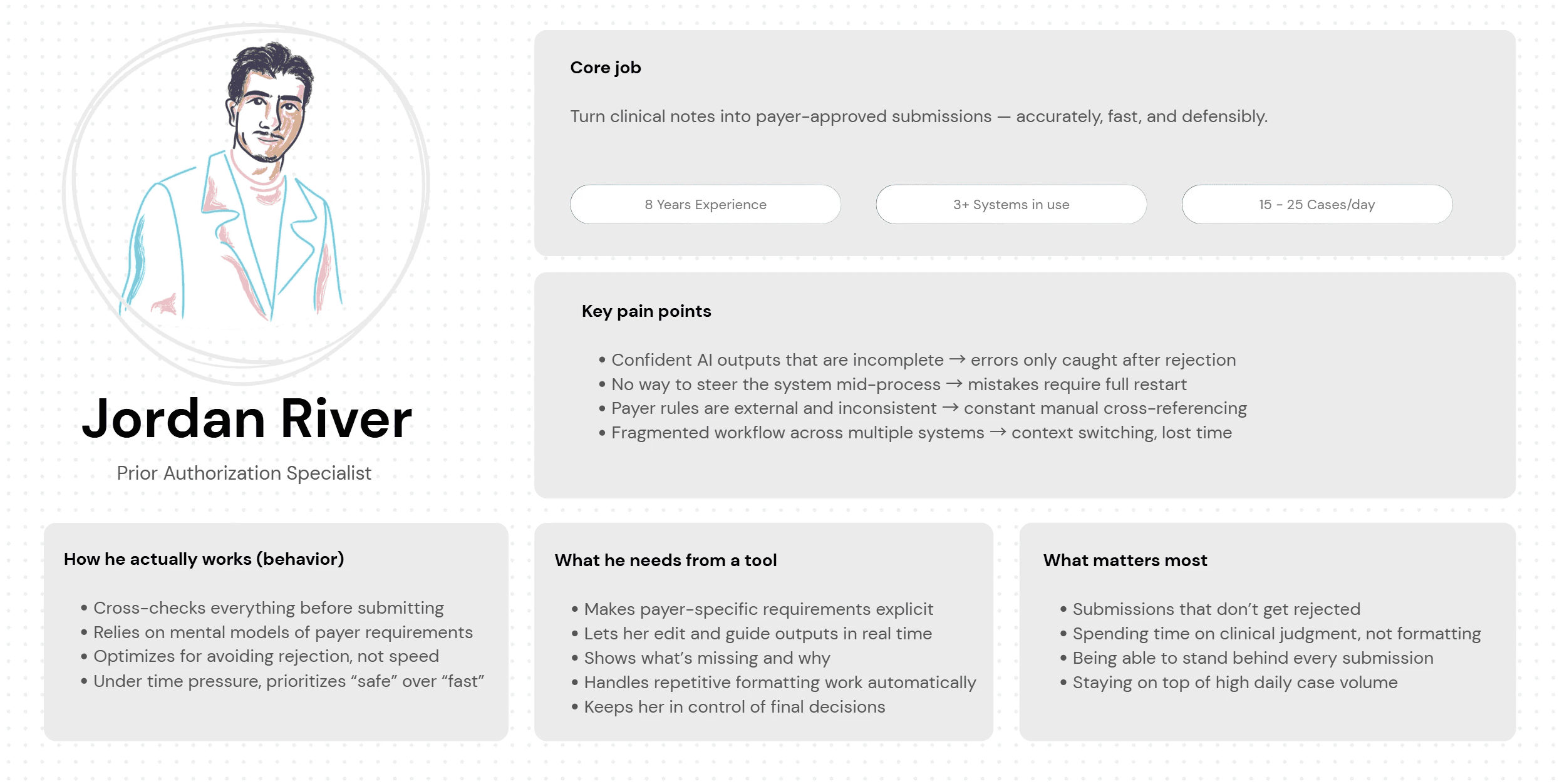

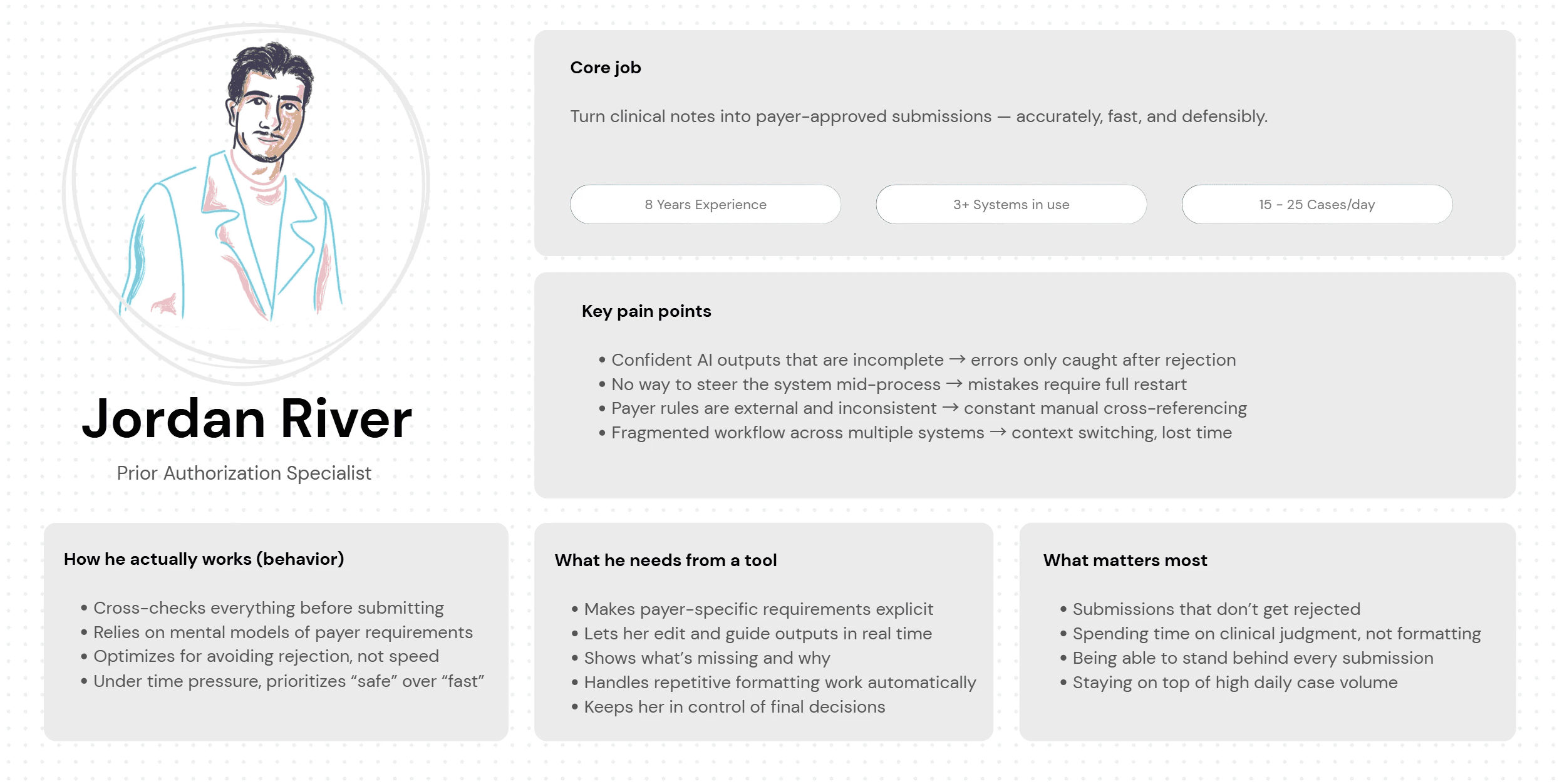

The people doing the work

Before any design happened, I needed to understand who actually lived inside this workflow every day.

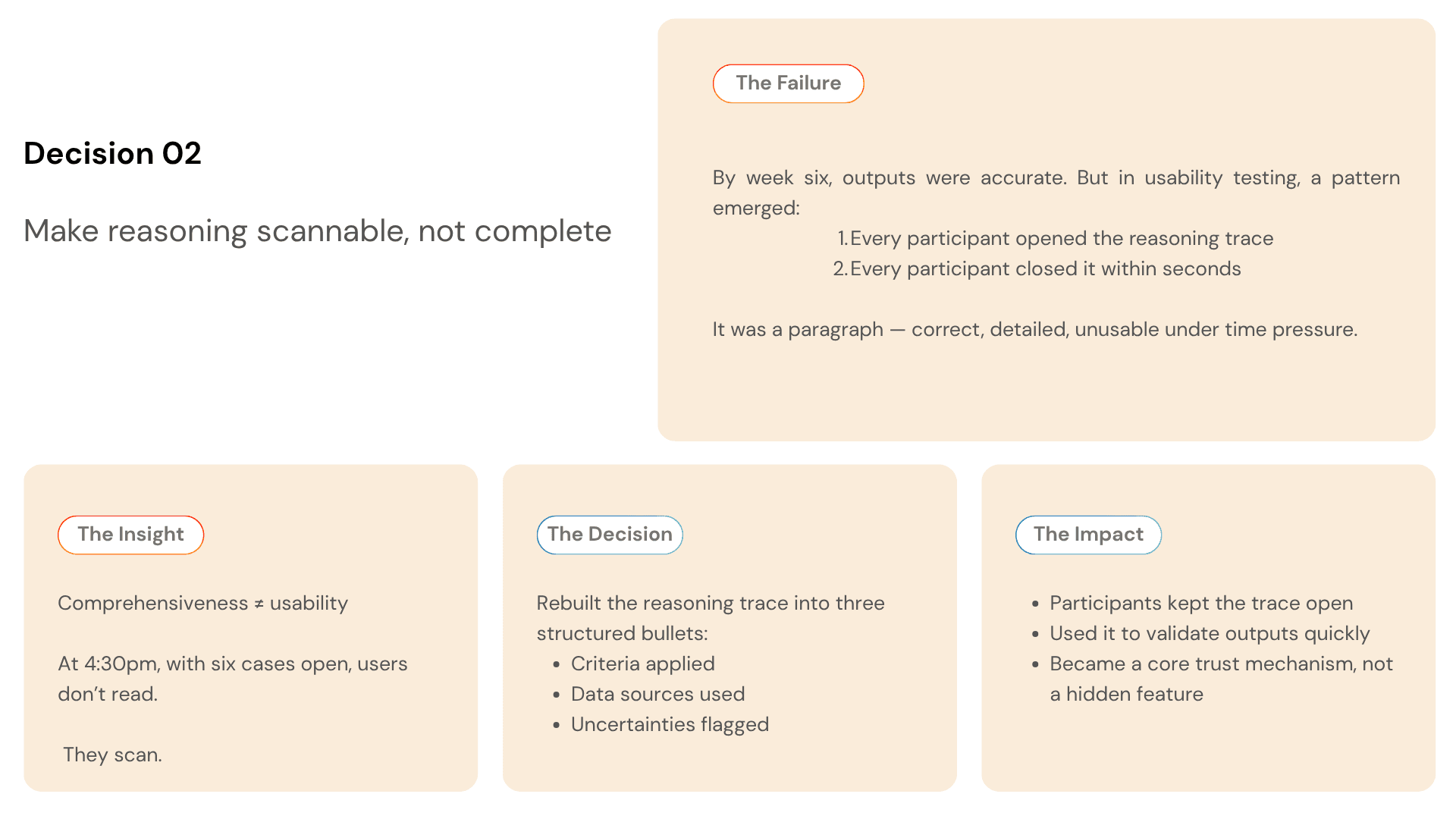

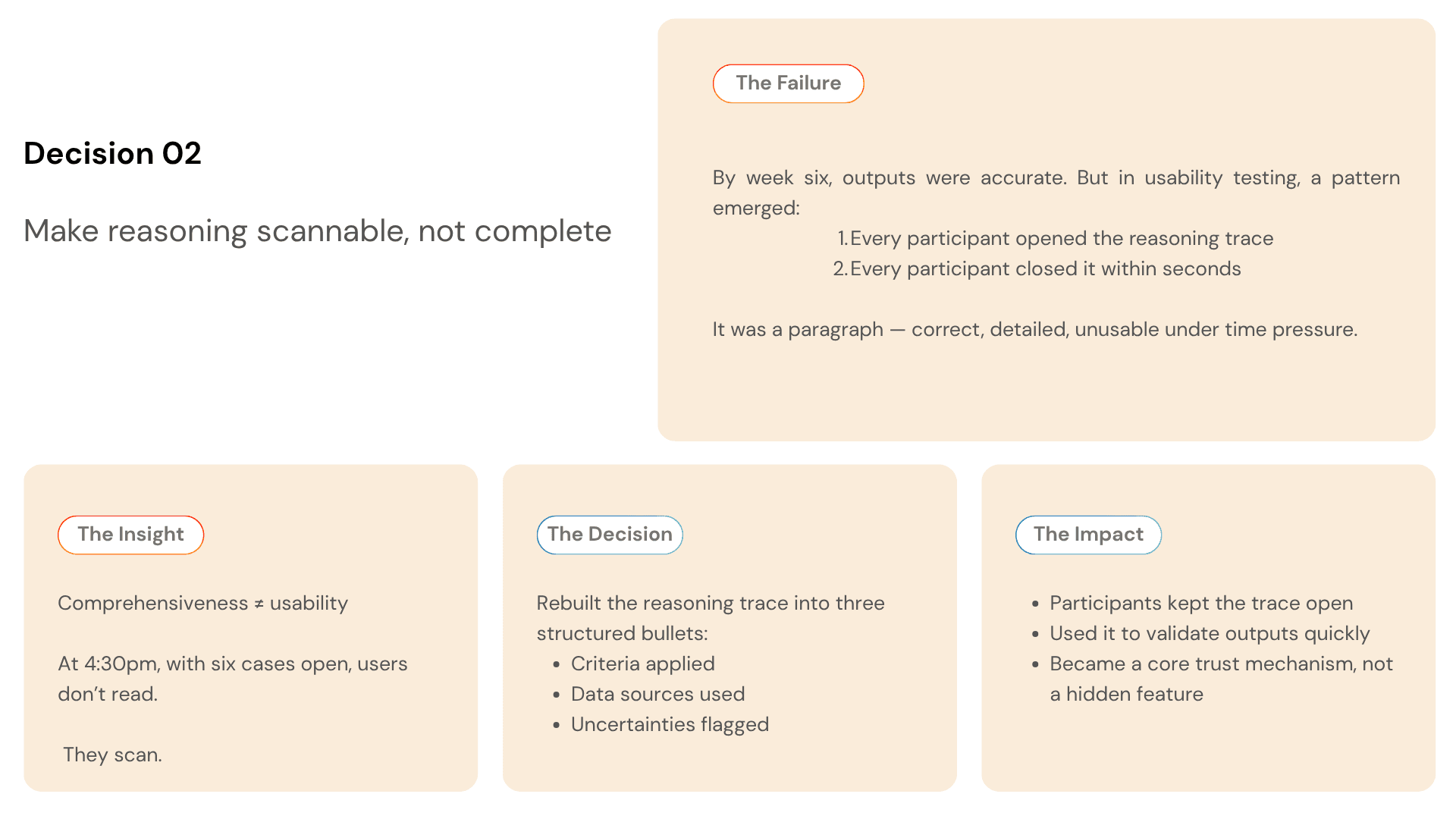

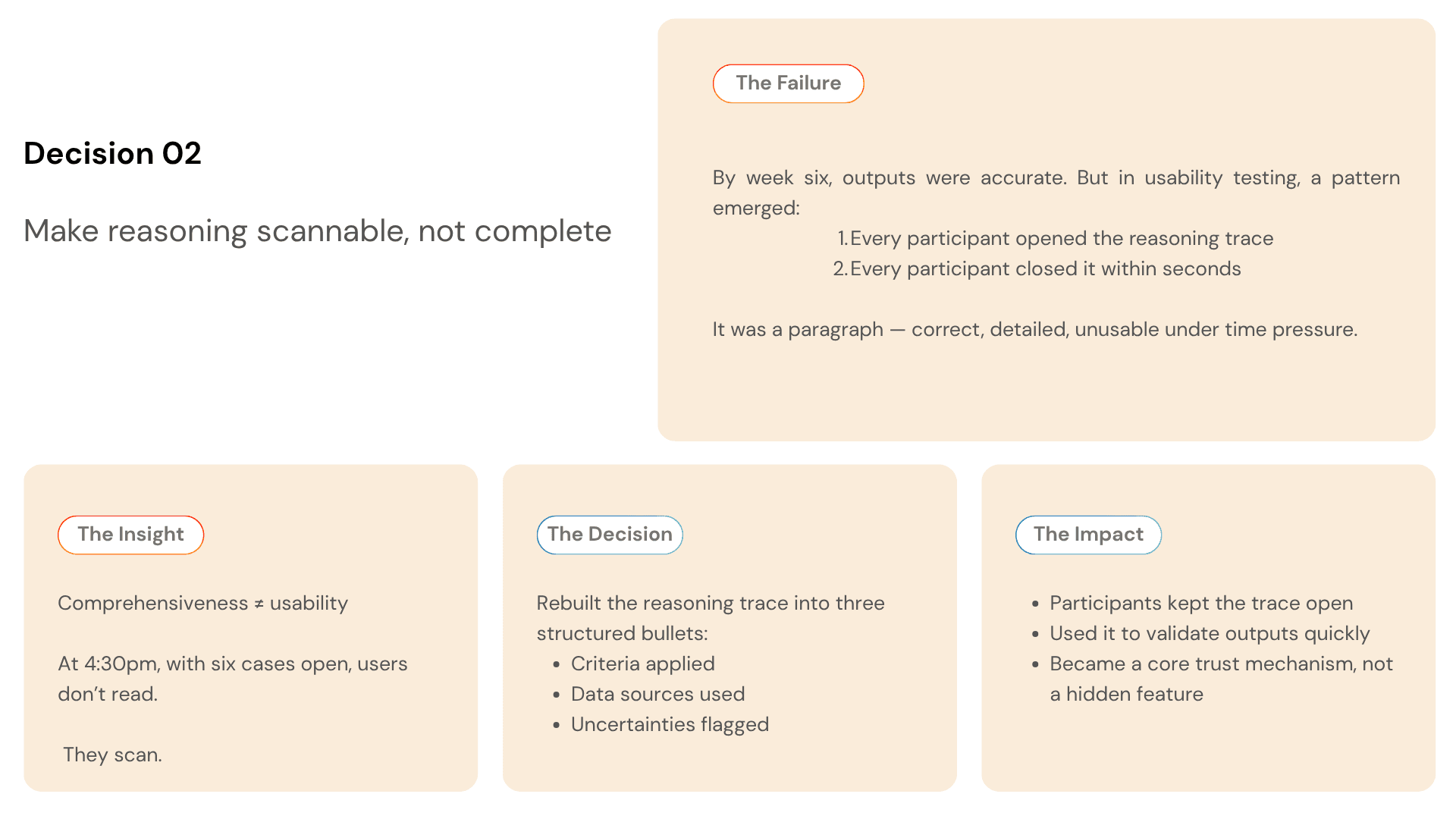

There is one behavioral moment that shaped the entire design. At 4:30pm, Jordan has six unfinished cases. He opens each one — not to work it, but to decide if it is safe to leave until tomorrow or needs to be pushed through before the payer's same-day cutoff.

He needs to evaluate an AI output in under 30 seconds.

If the reasoning takes longer than that, he closes it and goes with his gut.

Every design decision in this project was made with that 4:30pm moment in mind.

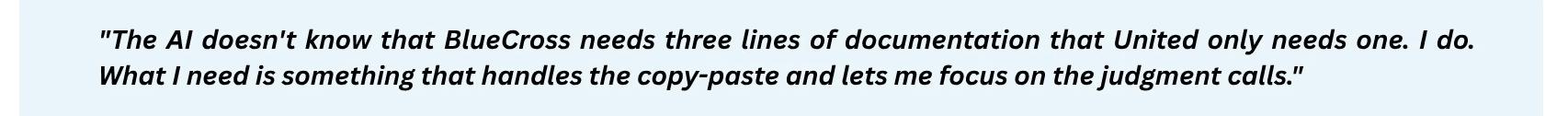

What the research actually revealed

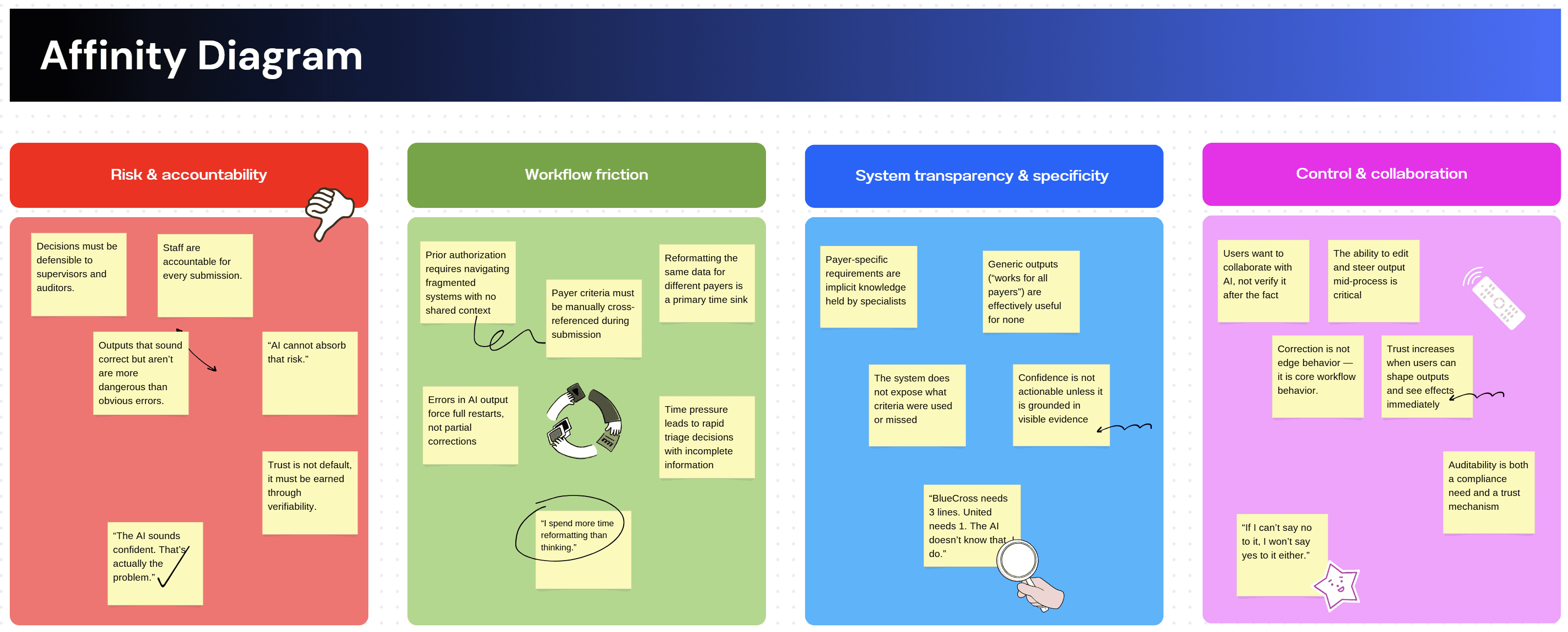

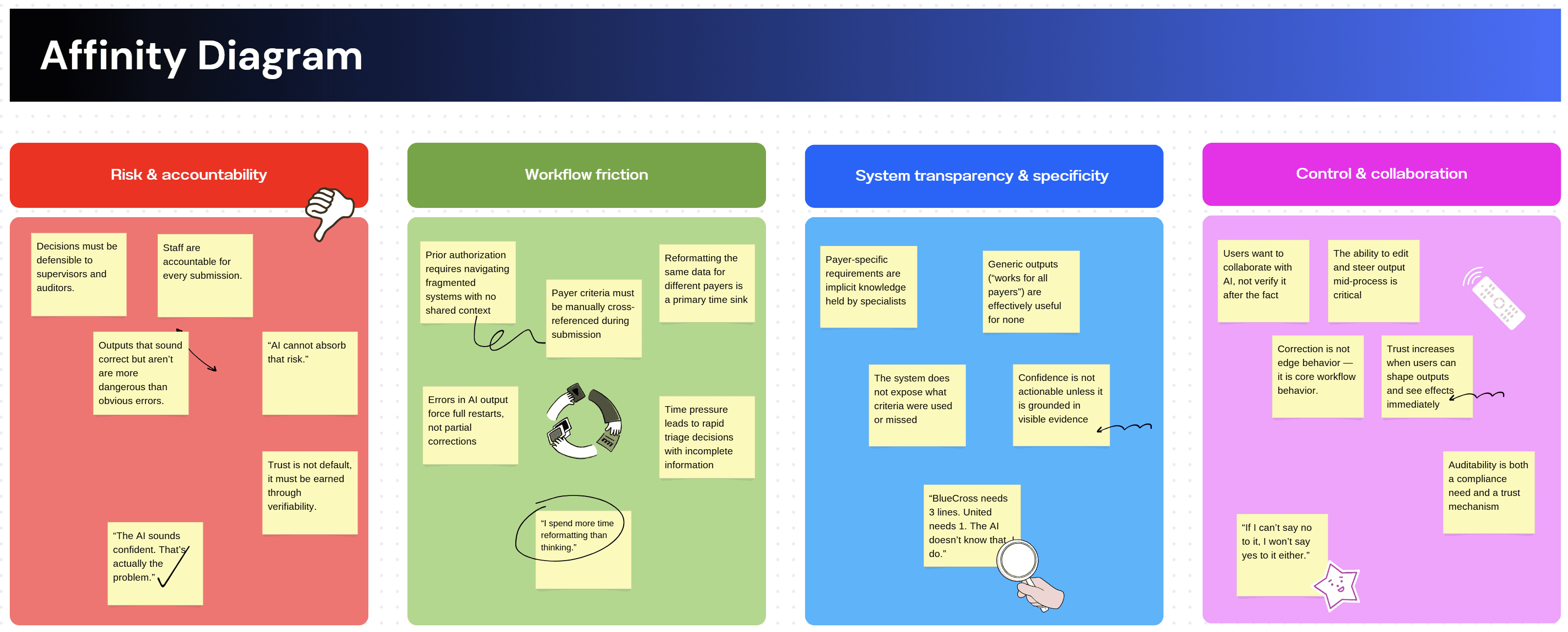

I ran three research activities before touching any UI. Each one was designed to answer a specific unknown — not to validate assumptions I already had.

Contextual inquiry: Shadowed 6 prior authorization specialists through complete prior auth cases. Watched every tab switch, every copy-paste, every moment of hesitation. Mapped the full 11-step workflow end to end and identified which steps were mechanical versus which required genuine clinical judgment.

Moderated usability testing: 8 participants completed identical prior auth tasks using the existing manual workflow across three systems, with think-aloud, screen recording, and task timing. This established the baseline: how long each step took, where errors occurred, and at which points specialists hesitated or stopped. No AI tool existed at this stage. The goal was to understand the workflow deeply enough to know exactly where LLM assistance could reduce load without introducing new failure modes.

Prompt audit: Reviewed 40 LLM outputs generated during a separate internal exploration of AI-assisted drafting. Tagged each output by error type (missing criteria, wrong payer schema, hallucinated completeness) and documented what specialists did next when they encountered each error type.

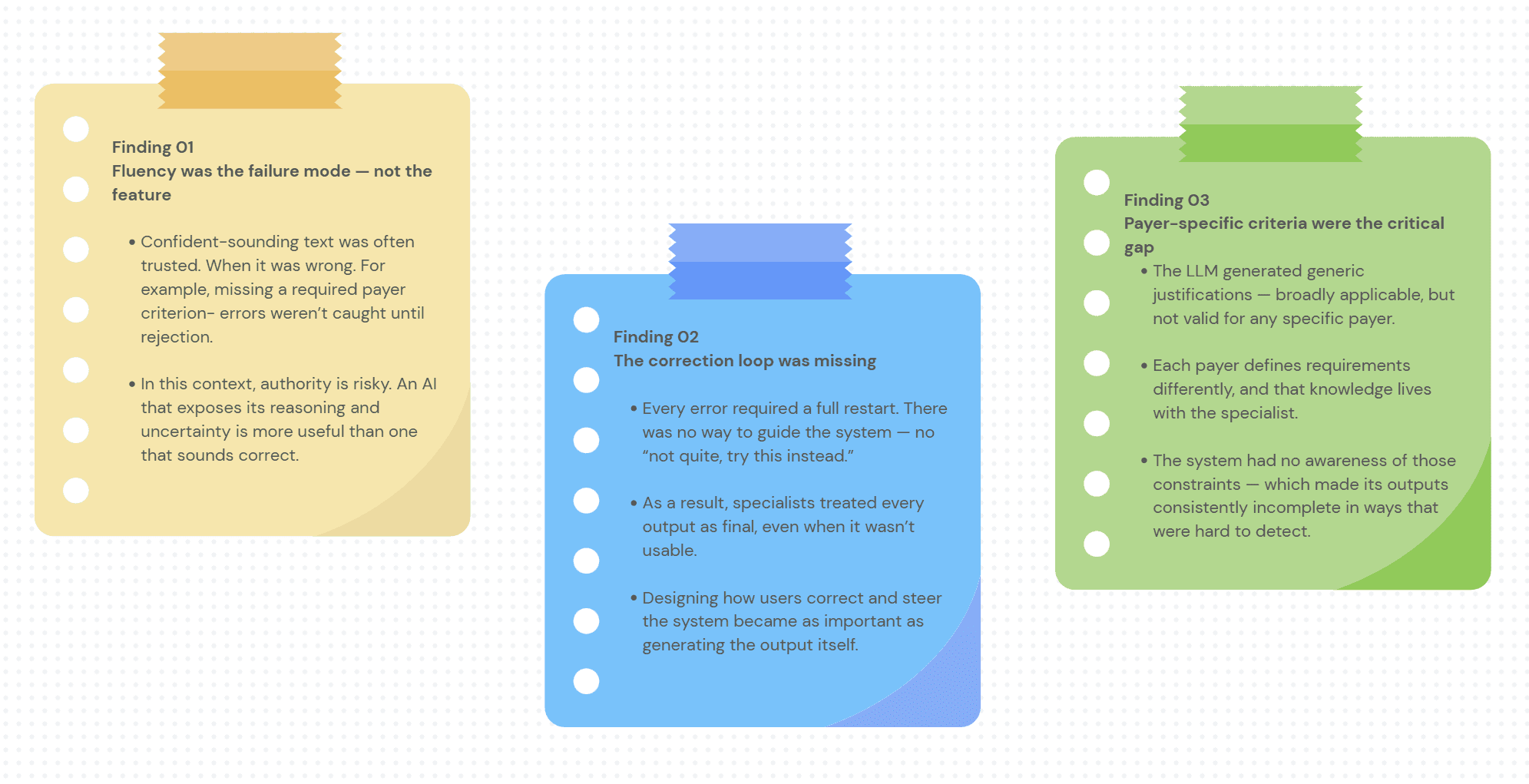

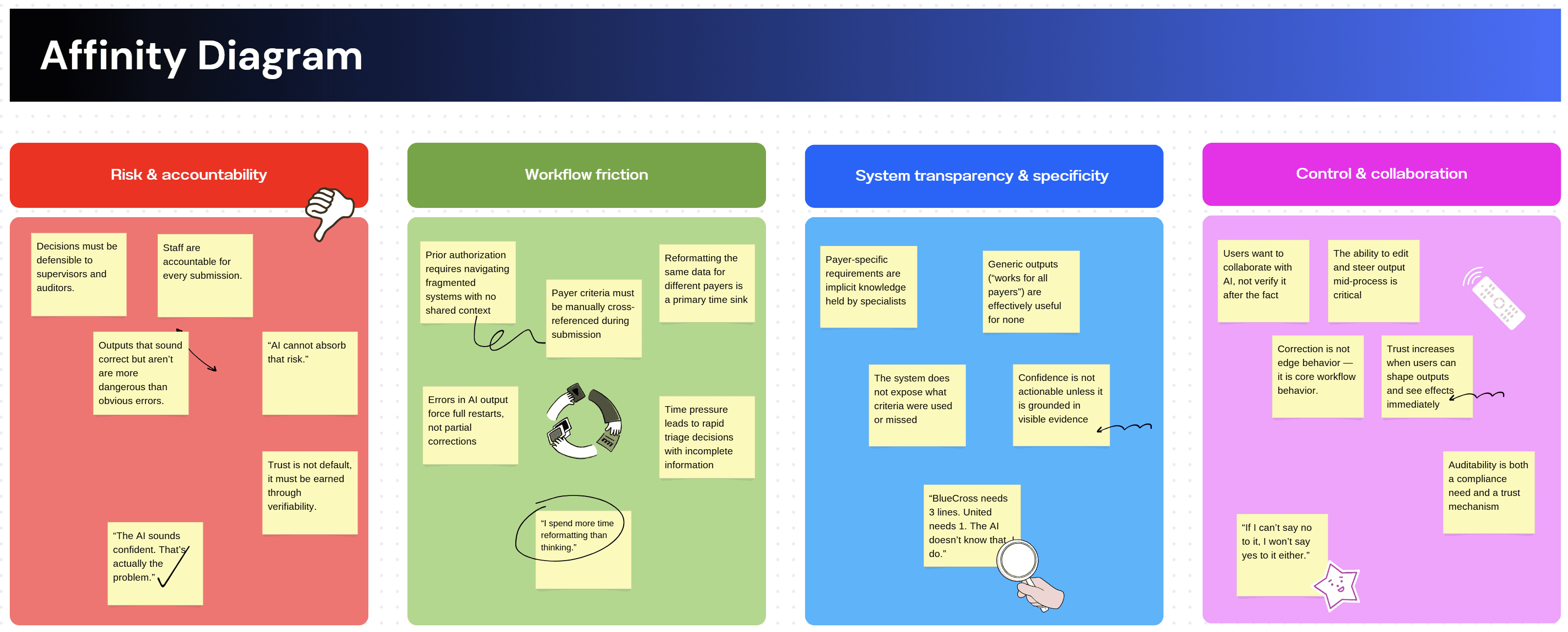

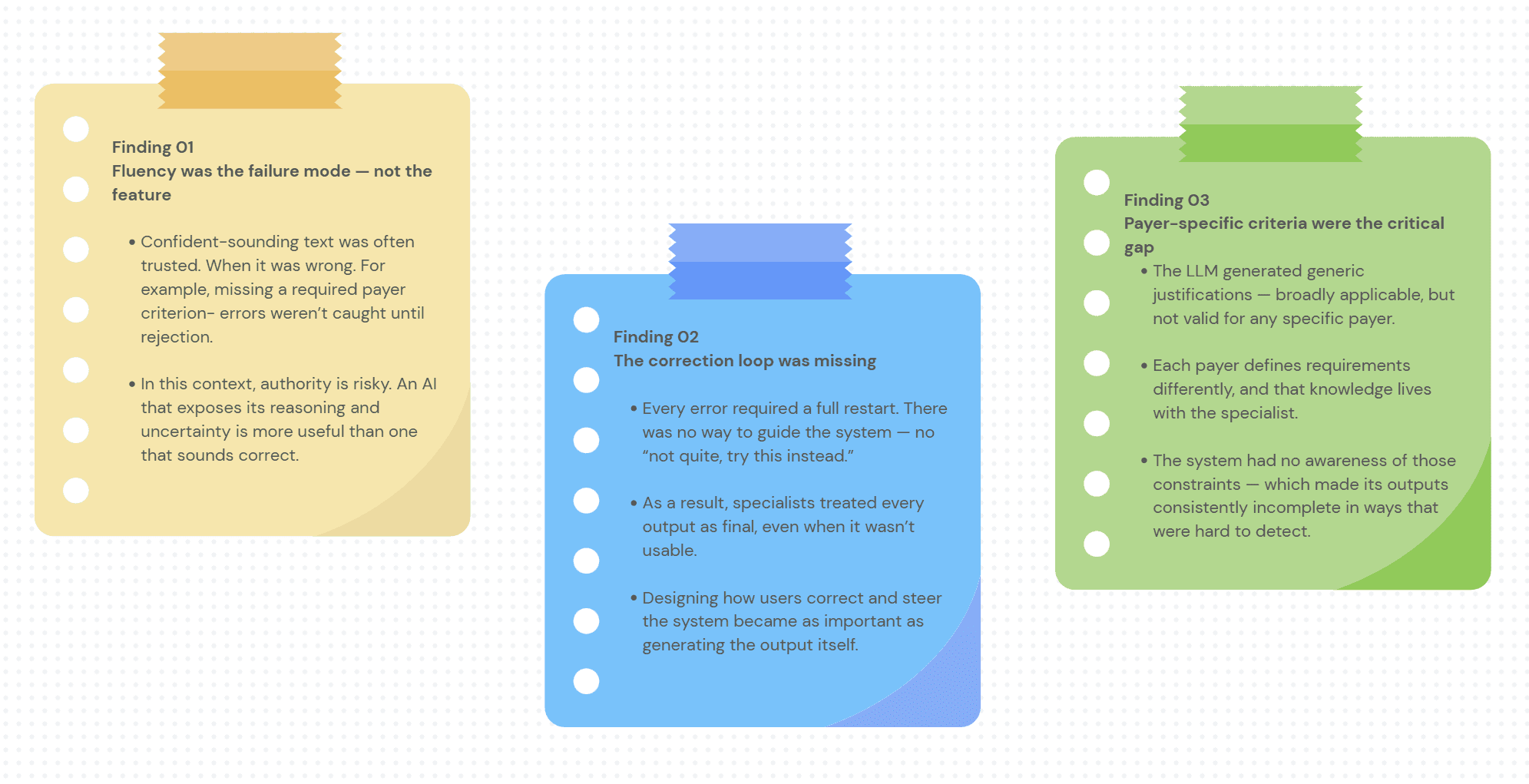

Three findings that changed the direction of the project

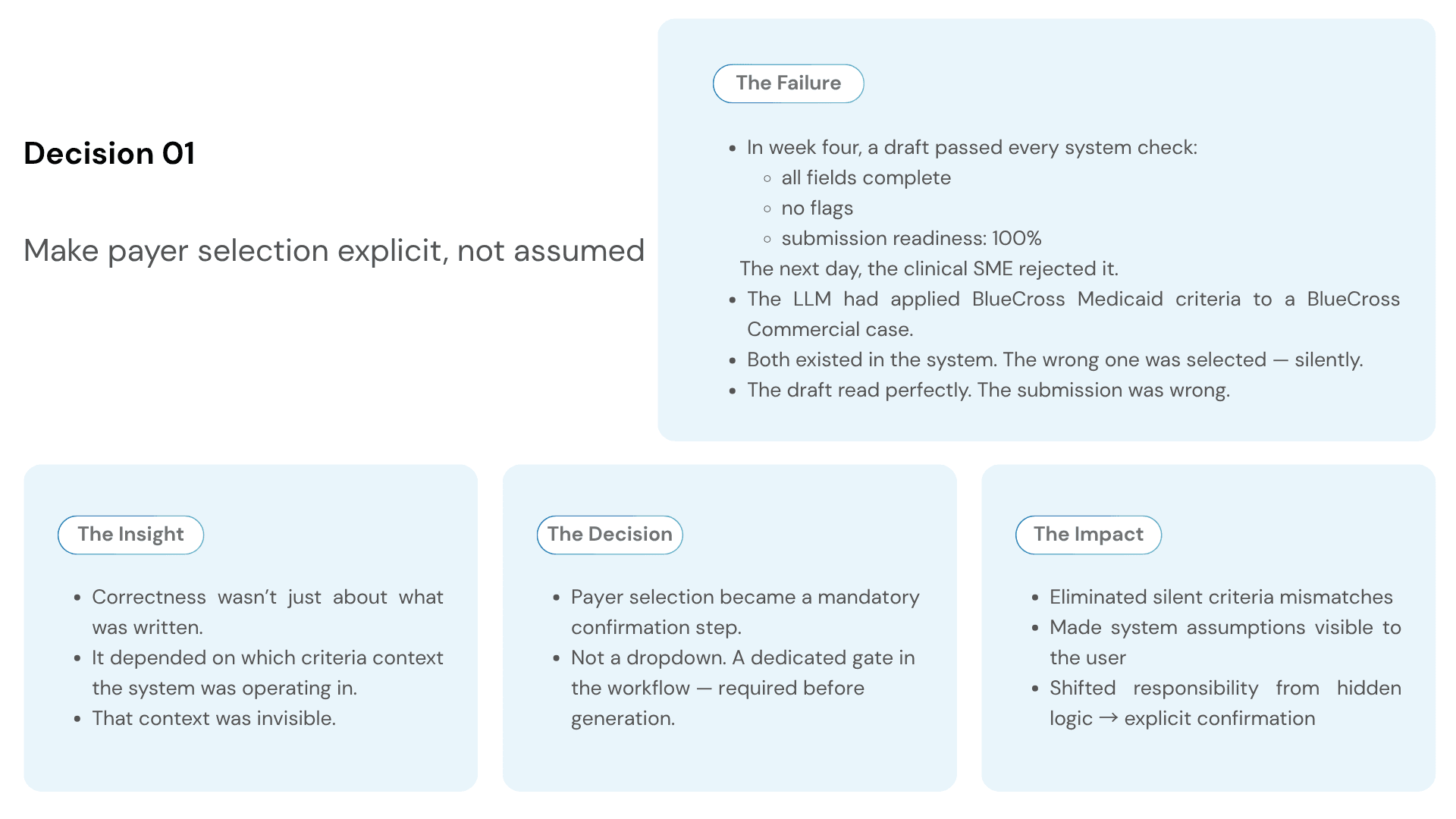

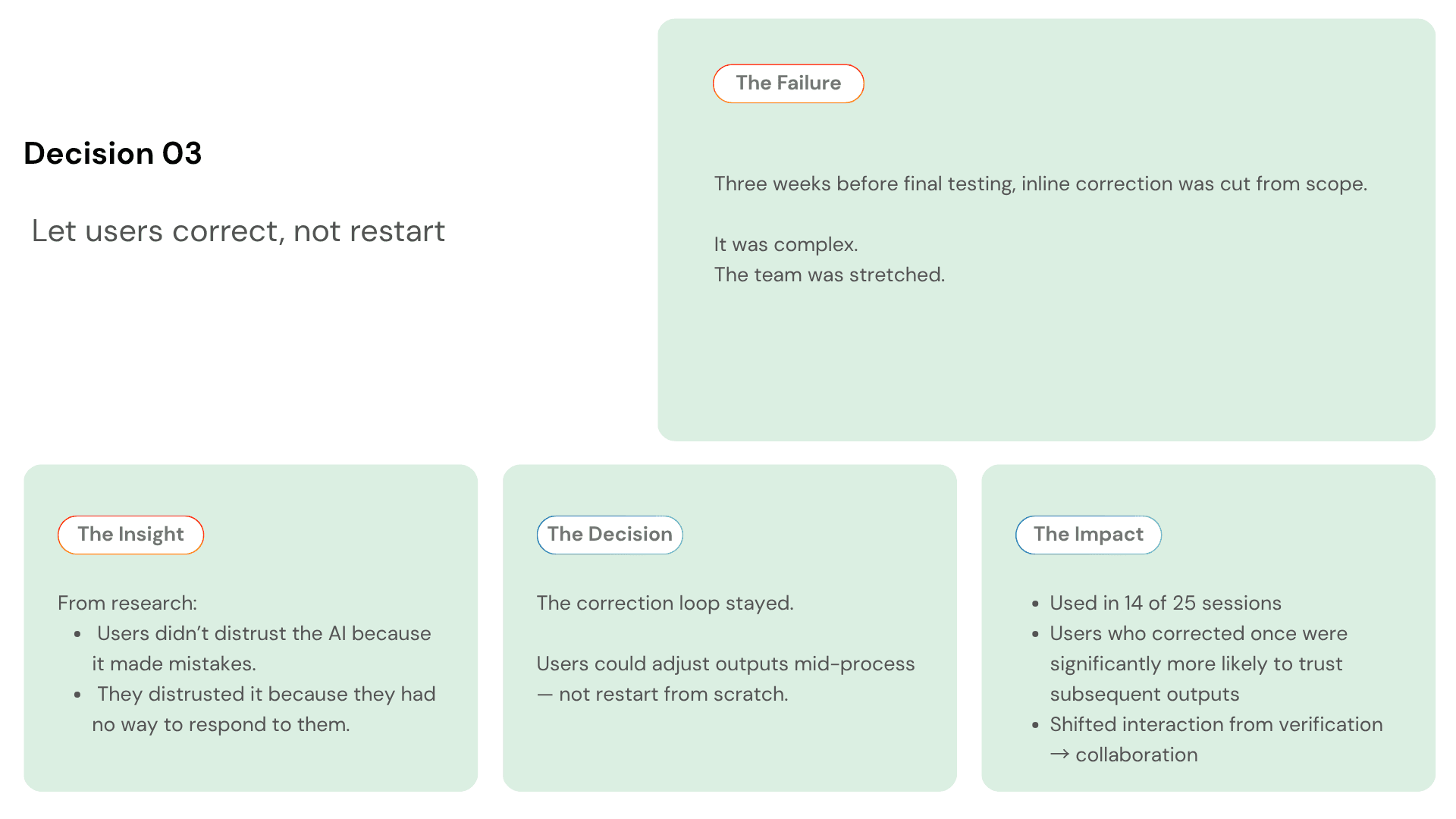

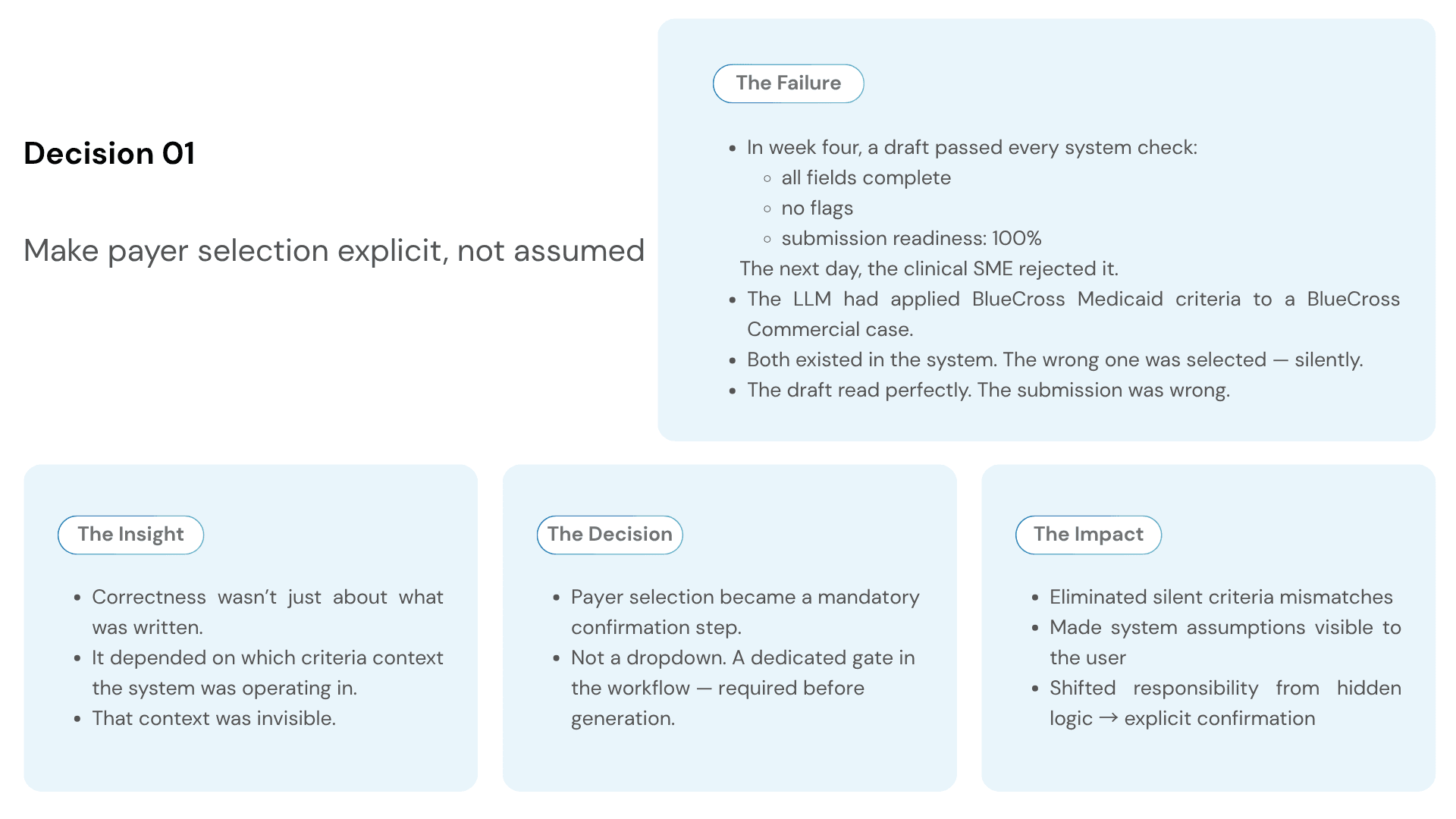

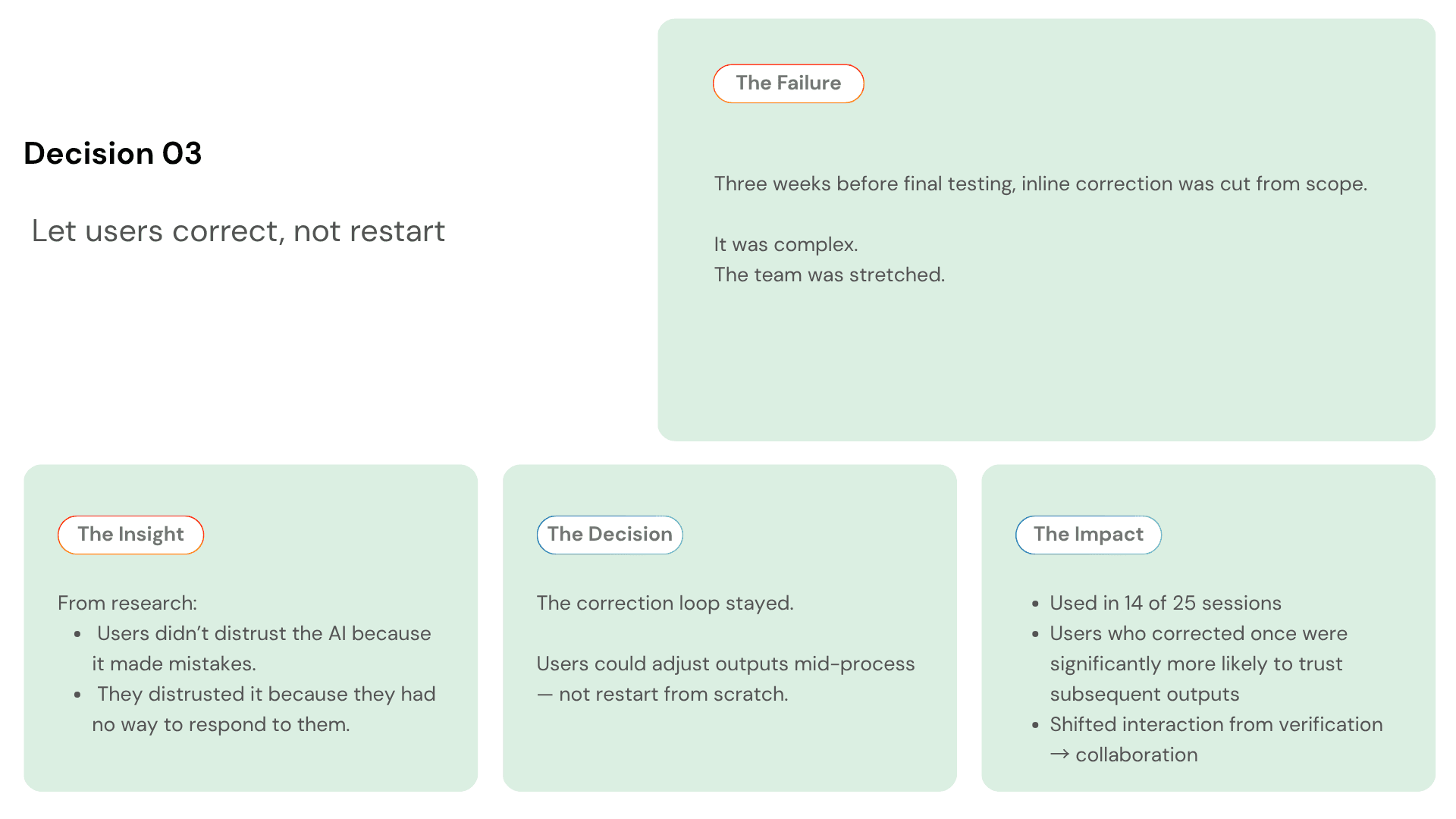

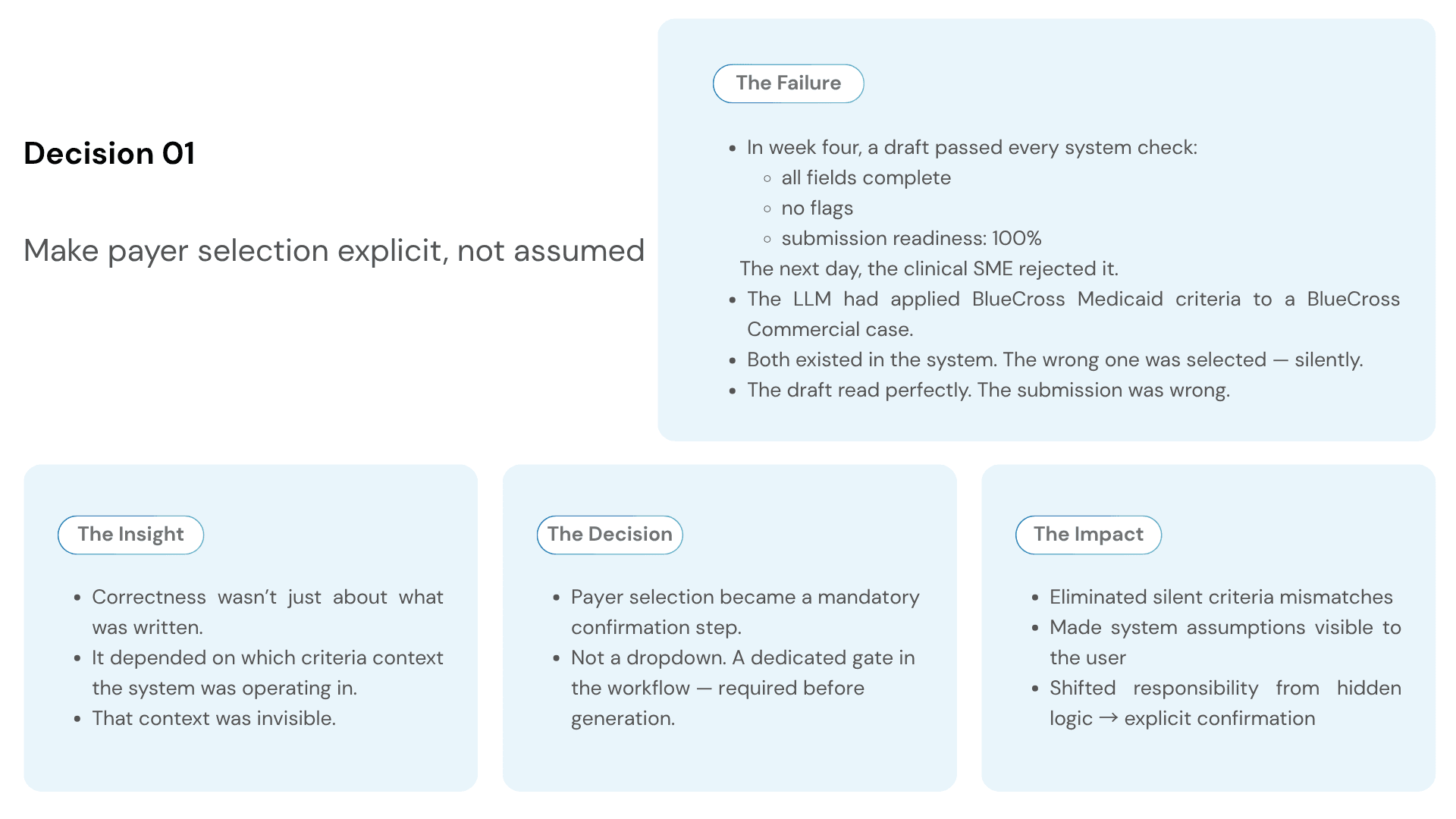

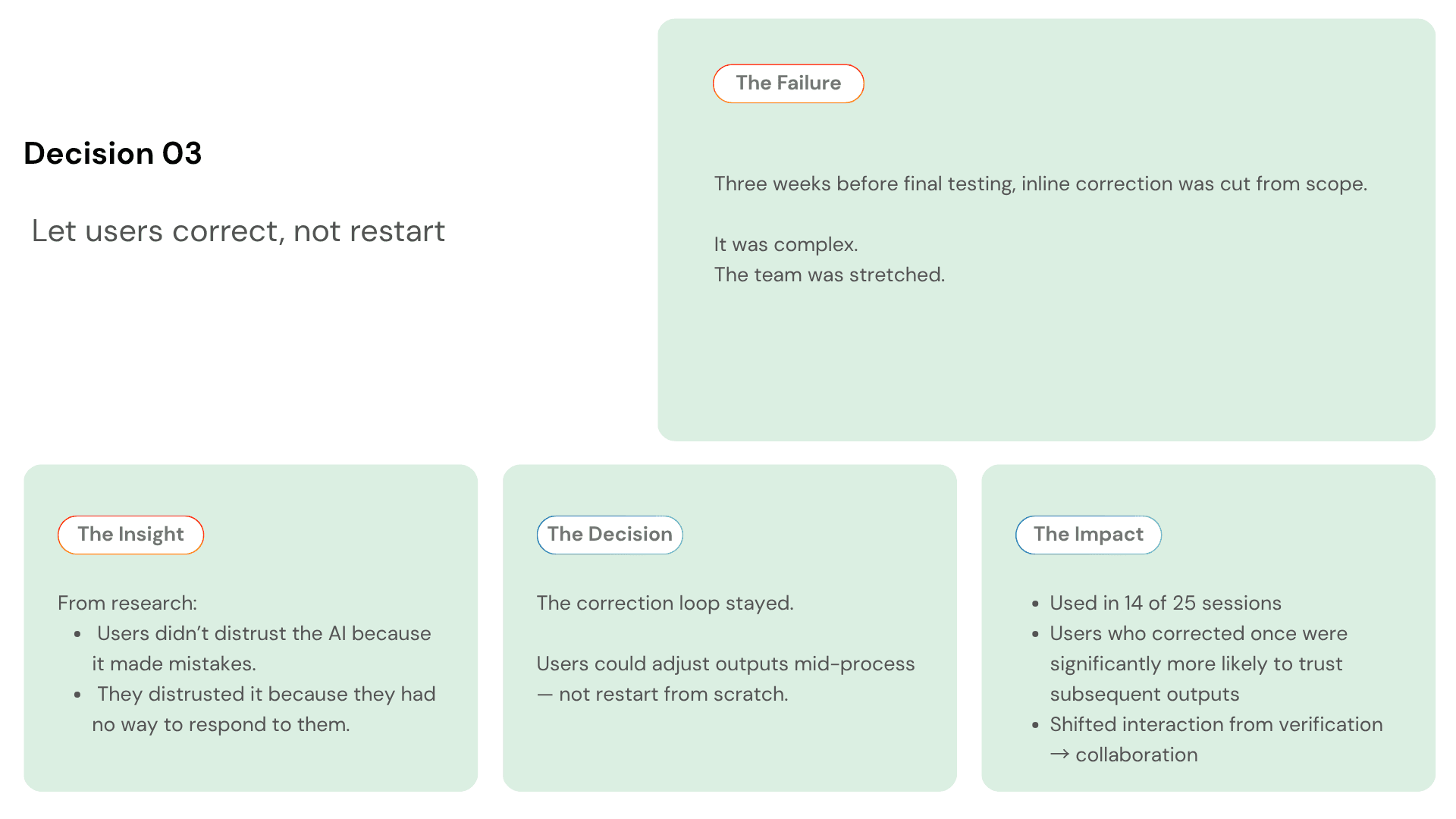

THREE DECISIONS THAT DEFINED THE PRODUCT

Every project has dozens of design decisions. These three changed what the product became.

Video Prototype

MANUAL WORKFLOW VS AI COPILOT — WHAT CHANGED

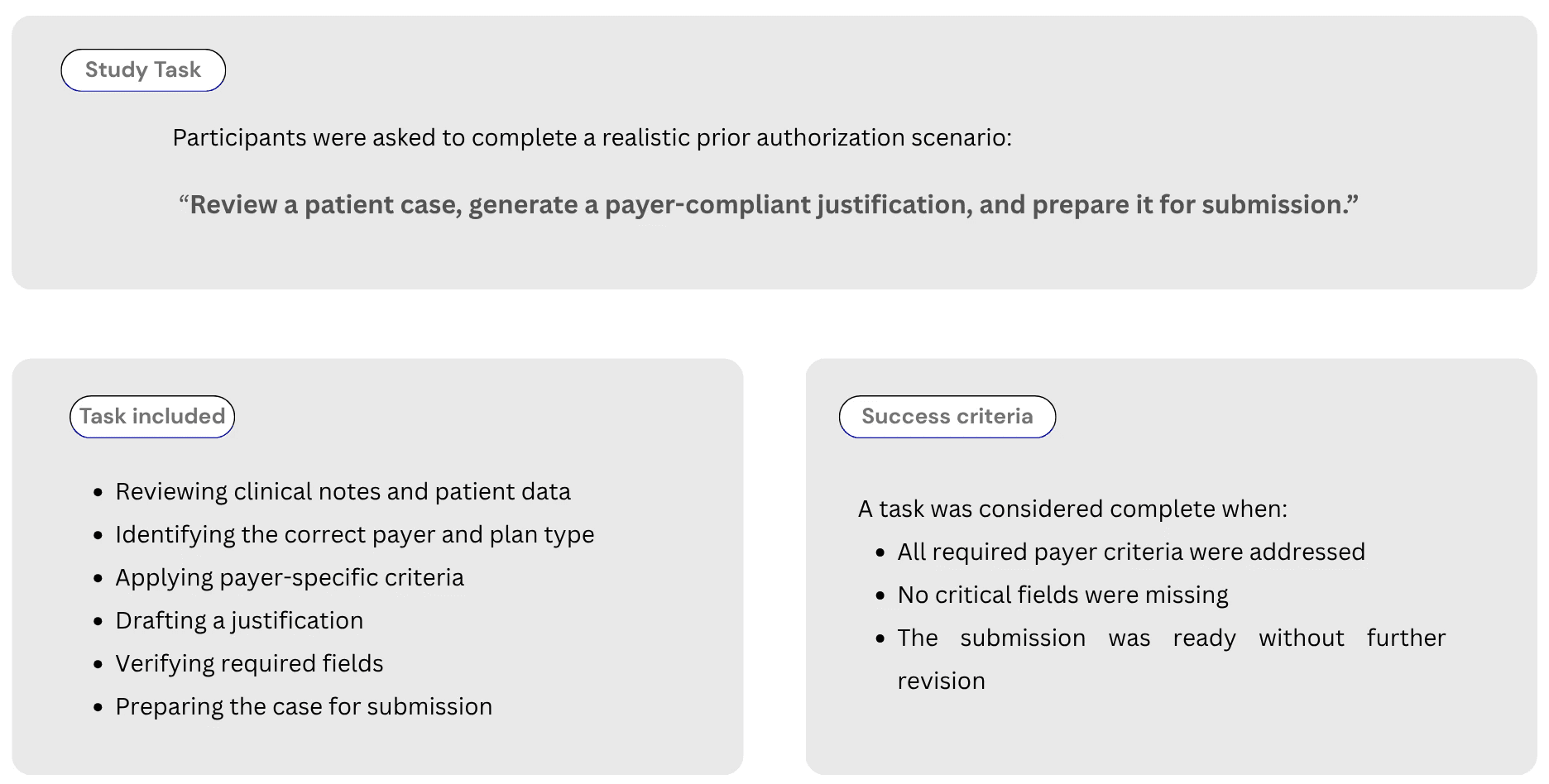

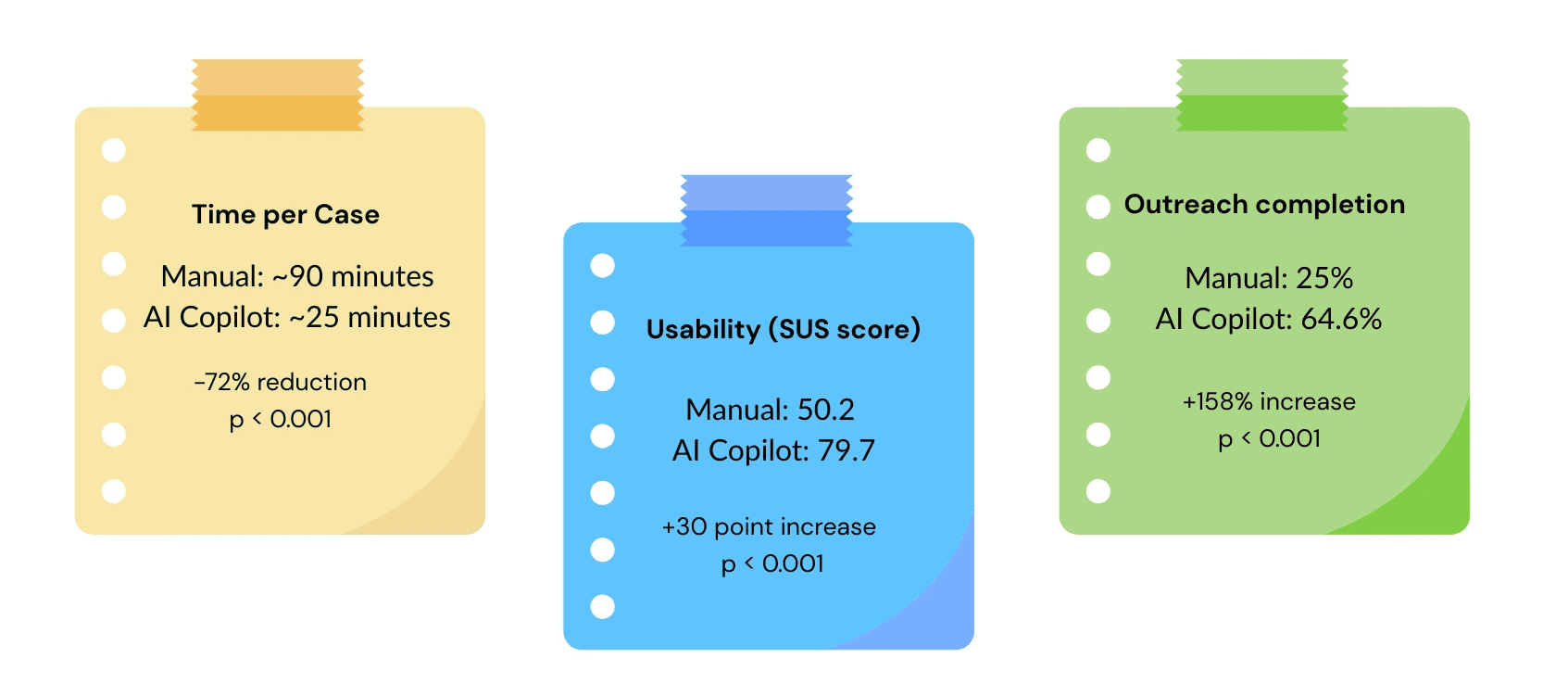

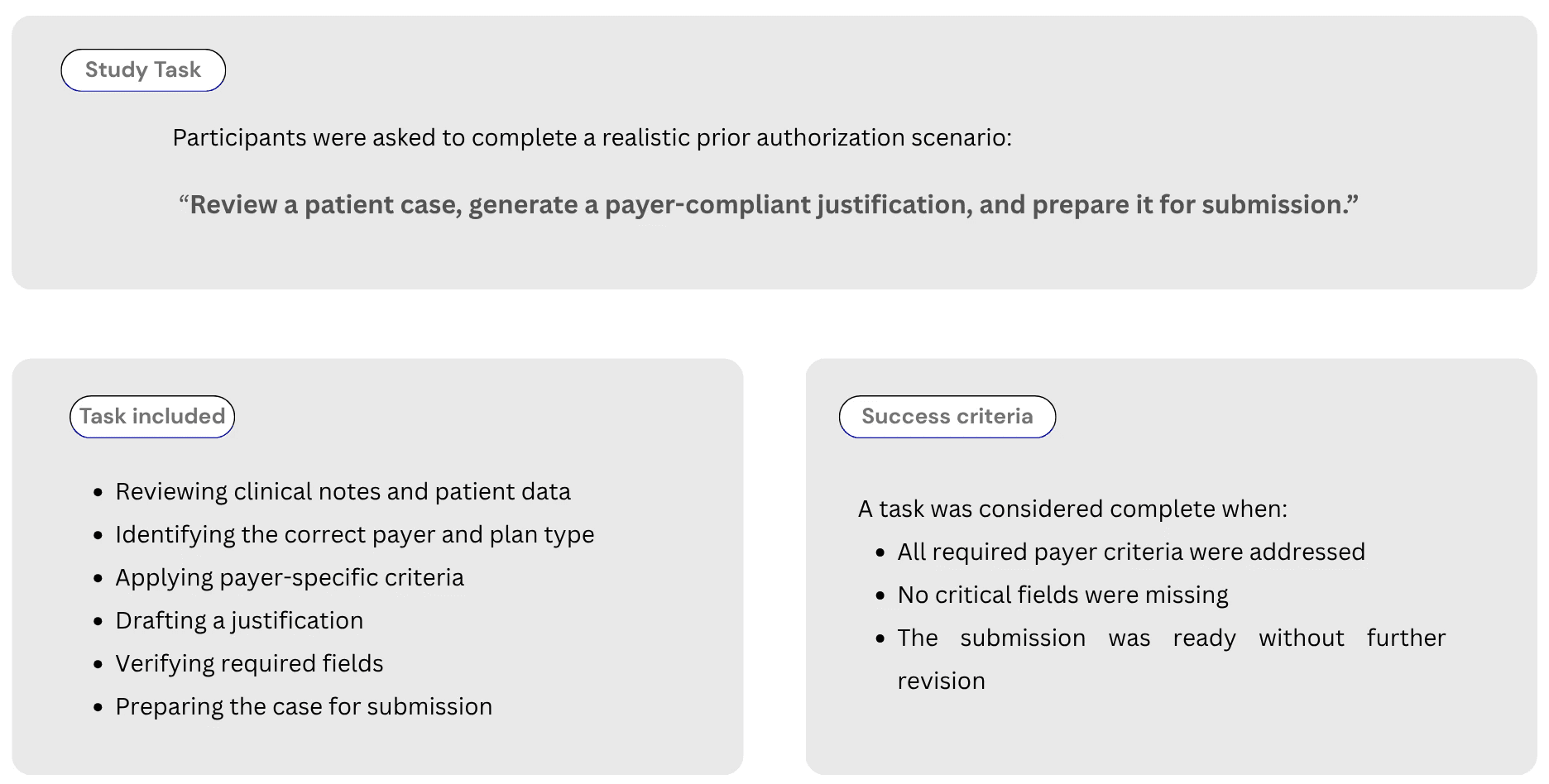

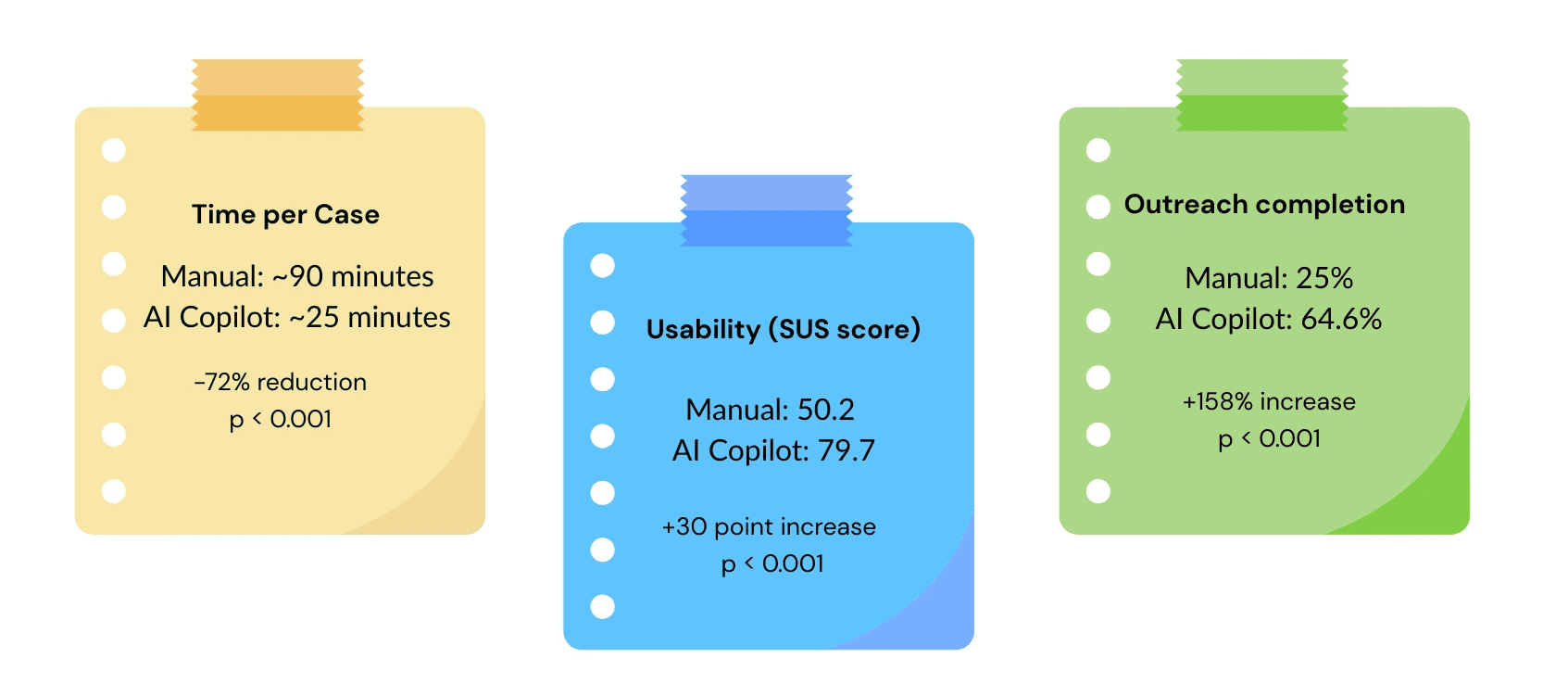

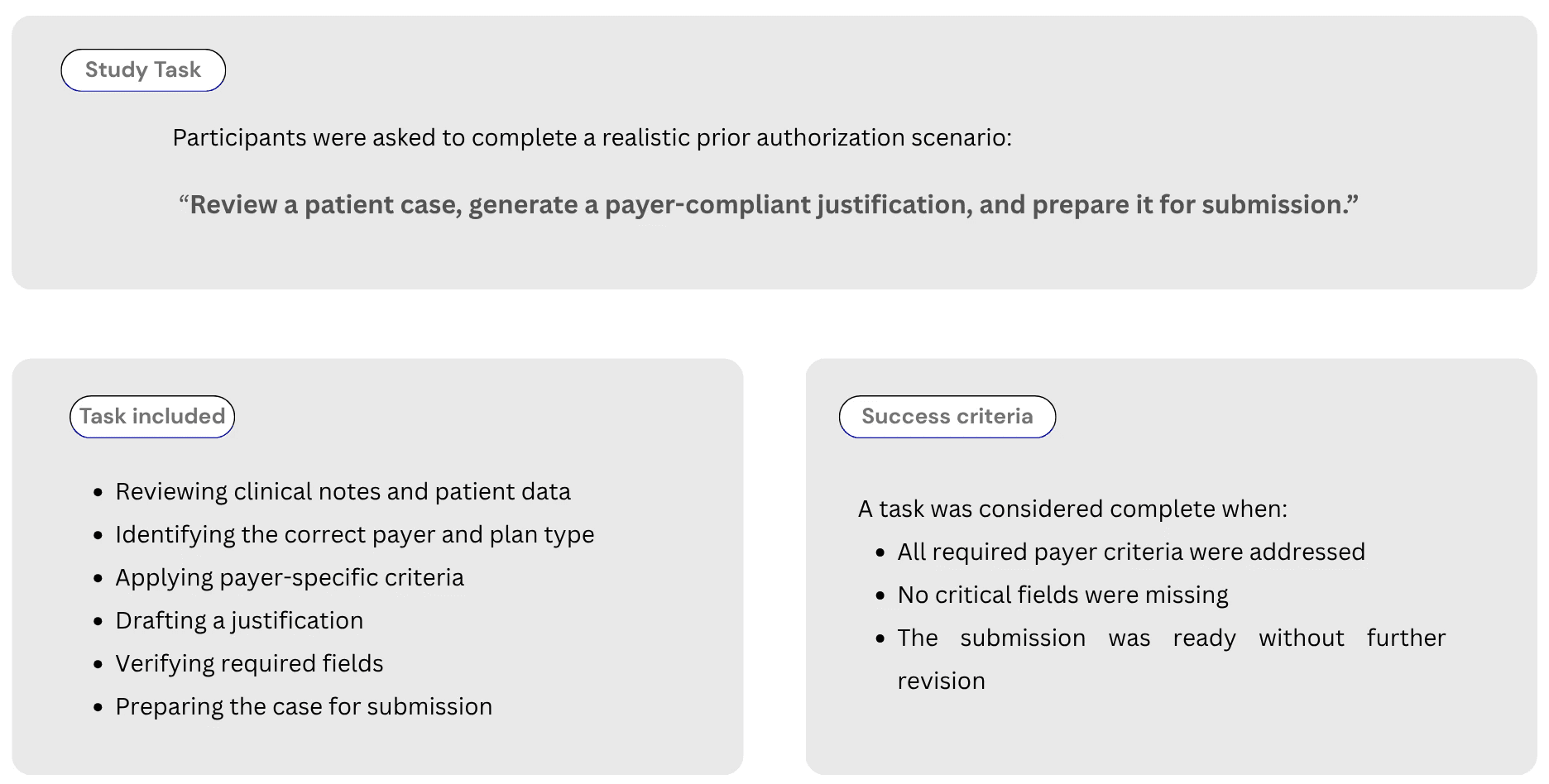

To evaluate the impact of the AI copilot, we ran a controlled usability study with 25 prior authorization specialists.

Each participant completed the same task twice:

once using the manual workflow

once using the AI copilot

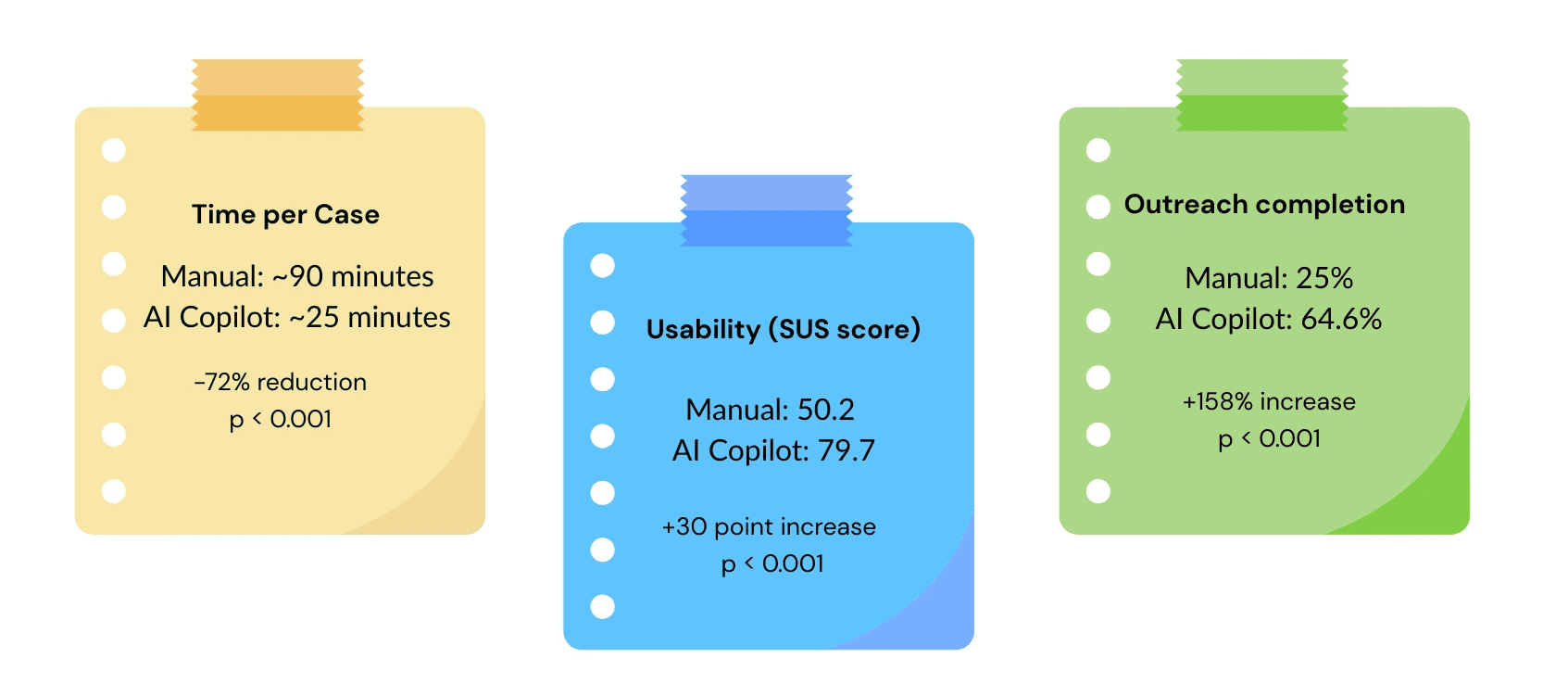

What Changed

Across all participants, performance improved on three key metrics.

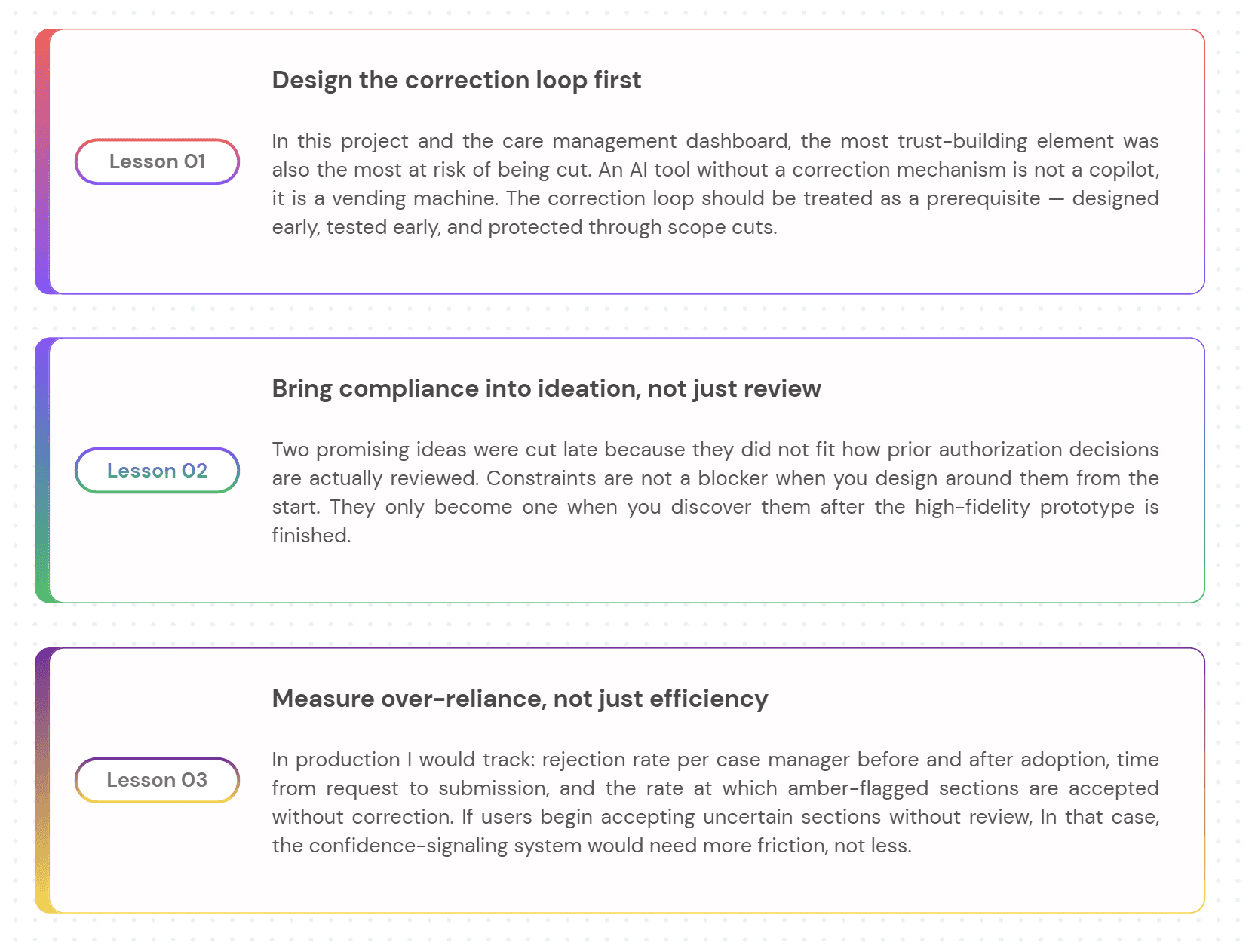

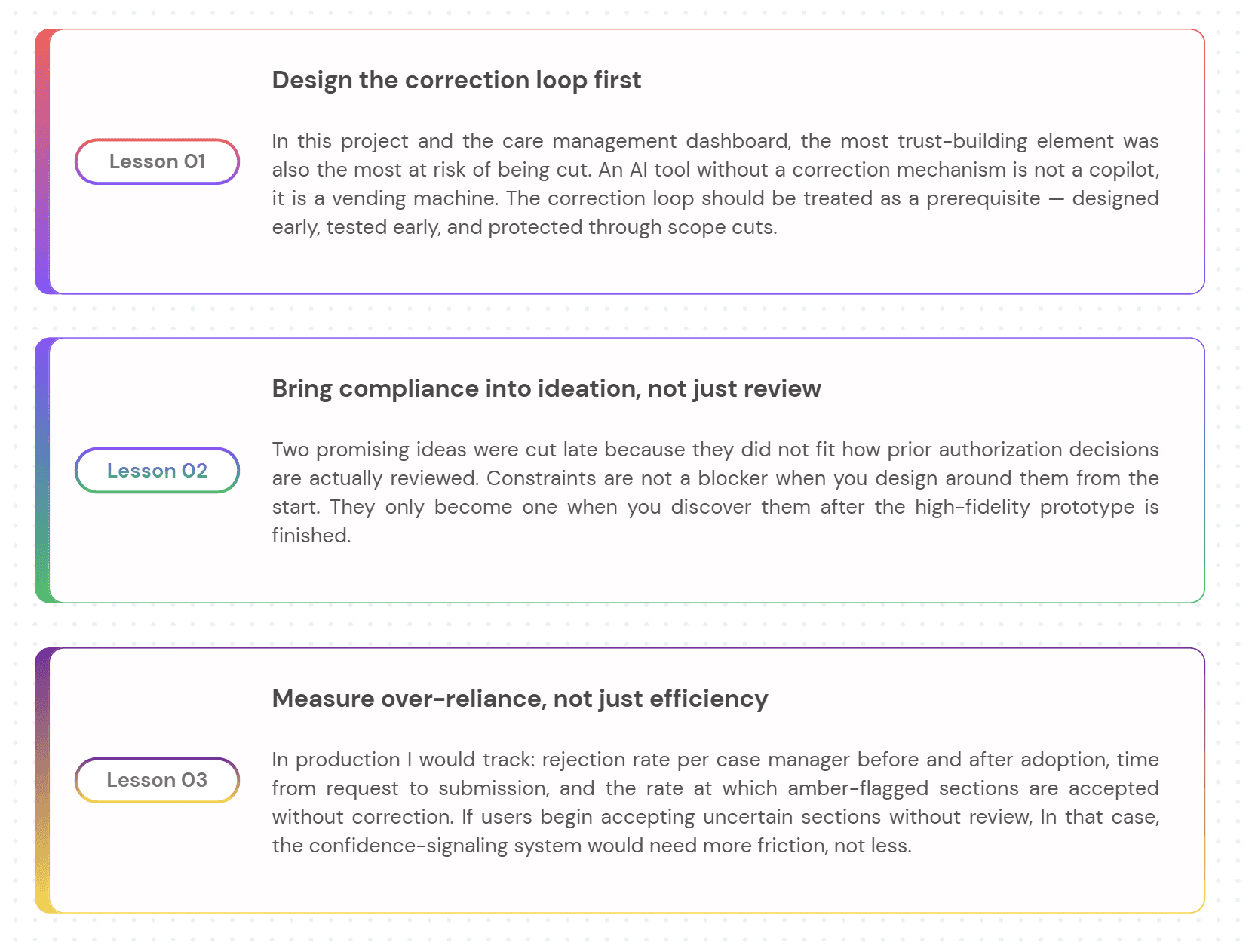

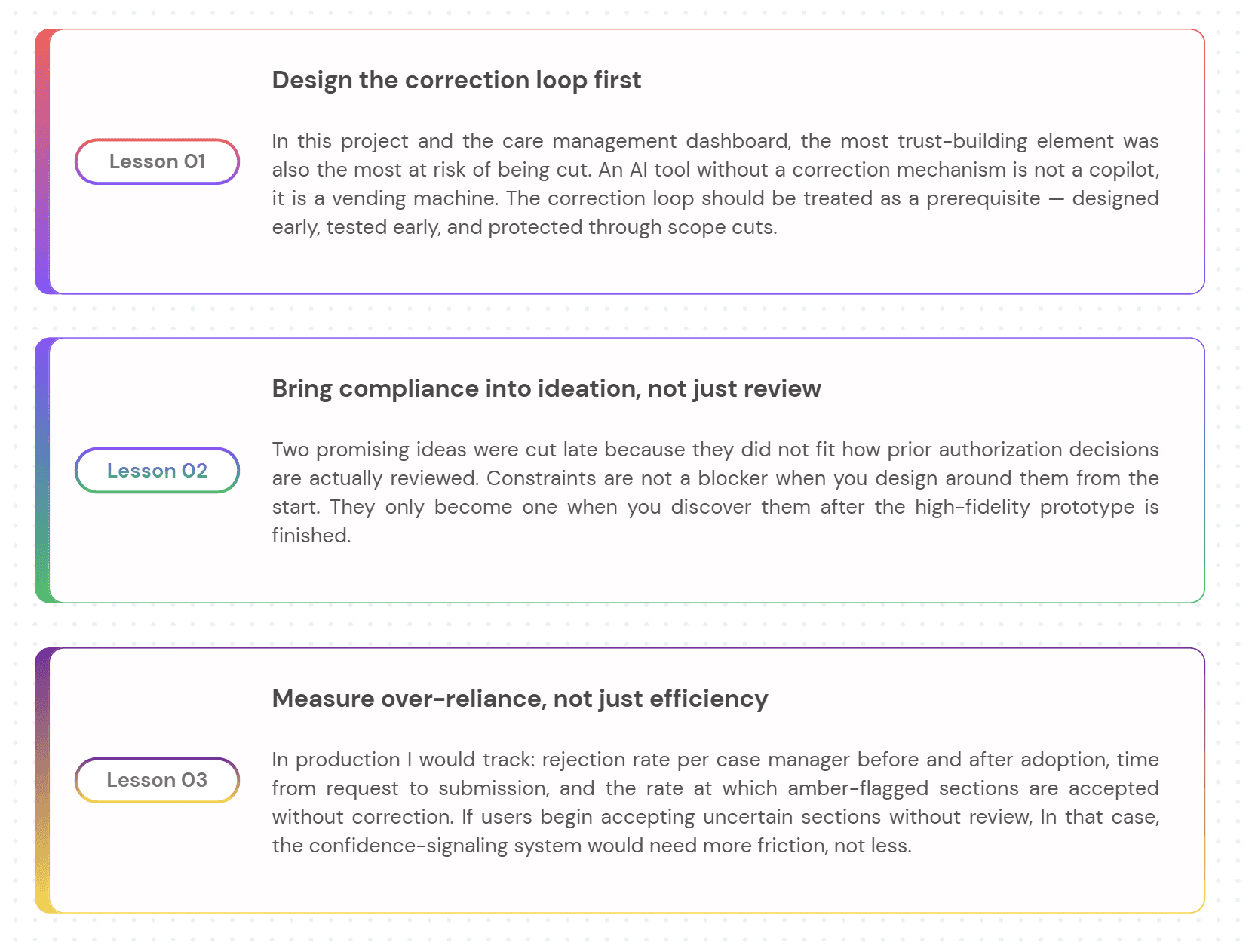

What I Learned

What I would do differently

Every project teaches you something you could not have known at the start. These are the three lessons I would carry into every AI product engagement from here.
